Deterministic Control Planes for Agentic AI: Why Your Agent Orchestrator Needs Hard Boundaries

Probabilistic handoffs between agents work in demos. In production, they create cascading failures that no amount of retry logic can fix. Deterministic control planes give you the predictability of traditional orchestration with the flexibility of agentic systems.

The Orchestration Chaos Problem

Every team building multi-agent systems hits the same wall around month three of production deployment. The agents work beautifully in isolation. The orchestration layer routes tasks correctly 94% of the time. And that remaining 6%? It creates cascading failures that consume more engineering time than the entire system saves.

The root cause is almost always the same: probabilistic handoffs. Agent A decides it is "probably done" and passes to Agent B. Agent B interprets the output with "reasonable confidence" and proceeds. Chain several of these together and end-to-end reliability decays geometrically with the number of handoffs. At a 94% success rate per handoff, five consecutive handoffs give you roughly a 73% end-to-end success rate (0.94^5 ≈ 0.734). That is not production-grade. That is a demo.
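The compounding is easy to check. The following is a minimal sketch that assumes handoffs fail independently and share a single success rate; the function name is illustrative, not part of any library.

```python
def end_to_end_success(per_handoff: float, handoffs: int) -> float:
    """Probability that every handoff in a chain succeeds, assuming independence."""
    return per_handoff ** handoffs


# How quickly a 94% per-handoff rate erodes as the chain grows.
for n in (1, 3, 5, 10):
    print(f"{n:>2} handoffs: {end_to_end_success(0.94, n):.1%}")
```

At five handoffs the result is about 73%; at ten it is closer to 54%. Real failures are rarely independent, so treat this as a rough model rather than a prediction.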

The solution is not better prompting or more sophisticated retry logic. It is architectural: deterministic control planes that enforce hard boundaries on what agents can and cannot do, when handoffs occur, and what constitutes a valid state transition.

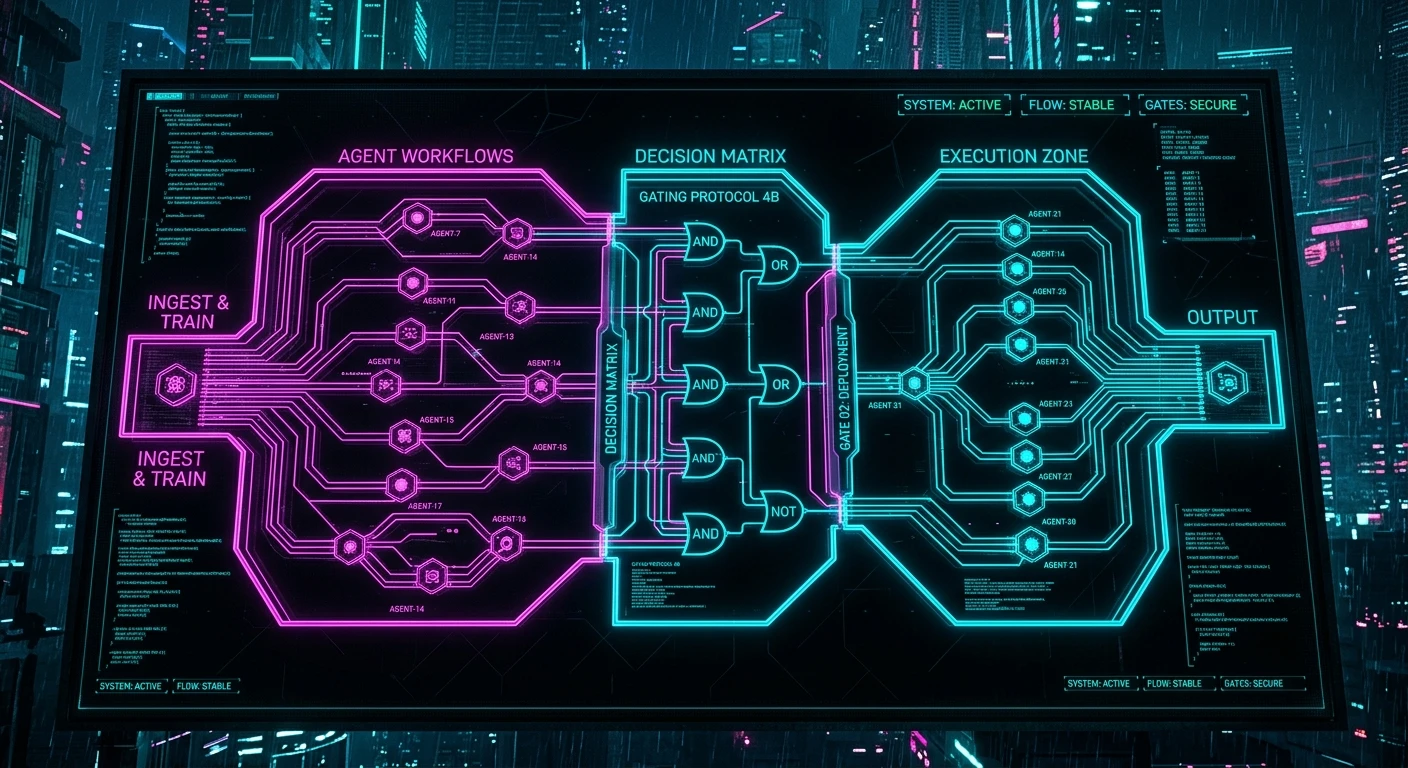

What a Deterministic Control Plane Actually Looks Like

A control plane for agentic AI borrows heavily from networking concepts. In network engineering, the control plane decides where traffic goes while the data plane actually moves the packets. In agentic systems, the control plane decides which agent executes, validates preconditions and postconditions, and manages state transitions -- while the agents themselves handle the actual cognitive work.

The critical distinction from traditional orchestration: the agents retain autonomy within their bounded context. They can reason, iterate, and make decisions. But they cannot unilaterally decide to hand off, skip steps, or modify the workflow. That authority lives in the control plane.

Concretely, this means:

State machines for workflow progression. Every multi-agent workflow is modeled as an explicit state machine with defined transitions. An agent cannot move the workflow to the next state -- it can only signal completion, and the control plane validates that signal against postconditions before transitioning.

Schema-validated handoff payloads. When Agent A completes and passes output to Agent B, that output must conform to a strict schema. Not "the LLM produced something that looks right" -- actual schema validation with type checking, required fields, and constraint satisfaction. If validation fails, the workflow enters an error state with defined recovery paths.

Capability boundaries. Each agent has an explicit capability manifest: what tools it can call, what data it can access, what actions it can take. The control plane enforces these boundaries at runtime, not through prompt instructions that the model might ignore.
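The first and third points can be sketched in a few dozen lines. This is an illustrative toy, not a reference implementation: the states, tool names, and postconditions are all hypothetical, and a production system would back this with a real workflow engine.

```python
from dataclasses import dataclass, field
from enum import Enum, auto
from typing import Callable


class State(Enum):
    EXTRACT = auto()
    CLASSIFY = auto()
    DONE = auto()
    ERROR = auto()


# Allowed transitions: the only routes the workflow can ever take.
TRANSITIONS = {State.EXTRACT: State.CLASSIFY, State.CLASSIFY: State.DONE}

# Capability manifest: tools the agent for each state may call (hypothetical names).
CAPABILITIES = {State.EXTRACT: {"ocr"}, State.CLASSIFY: {"taxonomy_lookup"}}

# Postconditions: plain predicates over the agent's output payload.
POSTCONDITIONS: dict[State, Callable[[dict], bool]] = {
    State.EXTRACT: lambda out: isinstance(out.get("text"), str) and bool(out["text"]),
    State.CLASSIFY: lambda out: out.get("category") in {"invoice", "contract"},
}


@dataclass
class ControlPlane:
    state: State = State.EXTRACT
    history: list = field(default_factory=list)

    def authorize_tool(self, tool: str) -> bool:
        """Runtime capability check, enforced in code rather than in the prompt."""
        return tool in CAPABILITIES.get(self.state, set())

    def signal_completion(self, output: dict) -> State:
        """Agents only signal; the control plane decides whether to transition."""
        if self.state not in TRANSITIONS:
            raise RuntimeError(f"no transition defined from {self.state.name}")
        passed = POSTCONDITIONS[self.state](output)
        next_state = TRANSITIONS[self.state] if passed else State.ERROR
        self.history.append((self.state, next_state))
        self.state = next_state
        return next_state


cp = ControlPlane()
print(cp.authorize_tool("ocr"), cp.authorize_tool("send_email"))
print(cp.signal_completion({"text": "Invoice 1041"}).name)  # postcondition holds
print(cp.signal_completion({"category": "memo"}).name)      # postcondition fails
```

The design choice worth noting is that the agent never sees `TRANSITIONS`. It returns a payload, and the decision about what happens next is made entirely by code the model cannot influence.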

The Architecture Pattern

The pattern we deploy in production systems has three layers:

Layer 1: The Workflow Engine. A traditional workflow engine (Temporal, Step Functions, or custom) that models the macro-level process. This is boring, deterministic infrastructure. It knows nothing about AI -- it just manages state transitions, retries, timeouts, and compensation logic.

Layer 2: The Agent Runtime. Each agent runs in an isolated runtime with defined inputs, outputs, and resource boundaries. The runtime handles prompt assembly, tool execution, and output parsing. Crucially, it exposes a simple interface to the workflow engine: accept input, produce output, or signal failure.

Layer 3: The Validation Gateway. Between every agent execution, a validation layer checks postconditions. Did the agent produce valid output? Does the output satisfy the business rules for this transition? Are there any safety or compliance constraints that would block progression? This layer is pure code -- no LLM reasoning, no probabilistic decisions.
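To make the layering concrete, here is a hedged sketch of how the three pieces might fit together. The names (`AgentResult`, `Step`, `run_workflow`) are invented for illustration, and the loop is a toy stand-in for what Temporal or Step Functions would actually provide.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class AgentResult:
    output: Optional[dict] = None
    failure: Optional[str] = None


# Layer 2: an agent runtime is anything that maps an input dict to an AgentResult.
AgentRuntime = Callable[[dict], AgentResult]
# Layer 3: a gateway check returns an error message, or None when the output passes.
GatewayCheck = Callable[[dict], Optional[str]]


@dataclass
class Step:
    name: str
    agent: AgentRuntime
    checks: list[GatewayCheck]
    max_attempts: int = 2


def run_workflow(steps: list[Step], payload: dict) -> dict:
    """Layer 1 stand-in: fixed step order, bounded retries, no AI-specific logic."""
    for step in steps:
        errors: list[str] = []
        for _ in range(step.max_attempts):
            result = step.agent(payload)
            if result.failure:
                errors = [result.failure]
            else:
                output = result.output or {}
                # Collect every non-None message returned by the gateway checks.
                errors = [msg for check in step.checks if (msg := check(output))]
            if not errors:
                payload = output  # validated output becomes the next step's input
                break
        else:
            raise RuntimeError(f"step {step.name!r} failed: {errors}")
    return payload


def summarize(inp: dict) -> AgentResult:
    # Stub standing in for an LLM-backed agent.
    return AgentResult(output={"summary": inp["text"][:20]})


def has_summary(out: dict) -> Optional[str]:
    return None if out.get("summary") else "missing summary"


print(run_workflow([Step("summarize", summarize, [has_summary])], {"text": "A long document body"}))
```

The engine never inspects what the agent did internally. It sees an output or a failure, runs the checks, and either advances or retries.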

This mirrors what we discussed in multi-agent orchestration patterns that survive real traffic, but pushes further into the deterministic guarantees that enterprise systems demand.

Why Probabilistic Handoffs Fail

The allure of probabilistic handoffs is flexibility. "Let the agents figure out who should handle this." In practice, this creates three failure modes:

Responsibility gaps. When no agent confidently claims a task, it falls through the cracks. In a customer service system, this means a query goes unanswered. In a document processing pipeline, it means a record is silently dropped.

Responsibility conflicts. When multiple agents claim the same task, you get duplicate processing, inconsistent outputs, and race conditions that are nightmarishly difficult to debug.

Context degradation. Each probabilistic handoff loses context. Agent B receives Agent A's output but not its reasoning. By the time you reach Agent D, the system has lost so much context that it is effectively starting from scratch -- but with the false confidence of having "already processed" the input.

Deterministic control planes eliminate all three by making routing explicit, enforcing boundaries in code, and passing context between agents in a structured form.

Implementing Hard Boundaries Without Killing Flexibility

The objection is always the same: "But we need flexibility. The whole point of agents is that they adapt." This conflates two types of flexibility:

Intra-agent flexibility -- how an agent reasons within its bounded task -- should remain unconstrained. Let the LLM think, iterate, use tools, and explore. This is where agentic AI delivers value.

Inter-agent flexibility -- how the system routes between agents and manages workflow state -- should be deterministic. This is where reliability lives.

The analogy is microservices. Each service can use whatever internal architecture it wants -- event sourcing, CQRS, simple CRUD. But the contracts between services are strict. API schemas are versioned. Service meshes enforce communication patterns. The internal flexibility of each service is enabled by the external rigidity of the system architecture.

As the principles of AI guardrails in production demonstrate, safety and performance are not opposing forces when you put the constraints at the right architectural layer.

State Validation in Practice

The validation gateway is where most teams under-invest. A typical implementation checks:

Structural validity. Does the output match the expected schema? This catches the 15-20% of cases where an LLM produces malformed output despite instructions.

Semantic validity. Does the output make sense given the input? A summarization agent that produces an output longer than its input has failed semantically even if structurally valid.

Business rule compliance. Does the output violate any domain constraints? A pricing agent that quotes below cost, a classification agent that assigns a deprecated category, a routing agent that sends a VIP customer to the wrong queue.

Safety constraints. PII in outputs that should be anonymized. Confidential data leaking between tenant boundaries. Actions that exceed the agent's authorization level.

Each check is a pure function. No LLM-as-judge patterns for critical validation -- that just adds another probabilistic layer to a system that needs determinism. Save LLM-as-judge for quality scoring and continuous improvement, not for production gatekeeping.
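A sketch of the four check types as pure functions might look like the following. The field names (`summary`, `price`, `unit_cost`) and the email pattern are assumptions chosen for illustration; a real gateway would use a proper schema library and a dedicated PII detector.

```python
import re
from typing import Callable, Optional

# Each check takes (agent_input, agent_output) and returns an error message or None.
Check = Callable[[dict, dict], Optional[str]]

# Deliberately crude pattern, standing in for real PII detection.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def structural(inp: dict, out: dict) -> Optional[str]:
    if not isinstance(out.get("summary"), str) or not isinstance(out.get("price"), (int, float)):
        return "structural: expected summary:str and price:number"
    return None


def semantic(inp: dict, out: dict) -> Optional[str]:
    if len(out["summary"]) >= len(inp["text"]):
        return "semantic: summary is not shorter than its input"
    return None


def business(inp: dict, out: dict) -> Optional[str]:
    if out["price"] < inp["unit_cost"]:
        return "business: quoted price is below cost"
    return None


def safety(inp: dict, out: dict) -> Optional[str]:
    if EMAIL.search(out["summary"]):
        return "safety: email address found in output"
    return None


CHECKS: list[Check] = [structural, semantic, business, safety]


def validate(inp: dict, out: dict) -> list[str]:
    """Run checks in order; stop after a structural failure, since later checks assume the schema."""
    errors = []
    for check in CHECKS:
        err = check(inp, out)
        if err:
            errors.append(err)
            if check is structural:
                break
    return errors


request = {"text": "x" * 200, "unit_cost": 10.0}
print(validate(request, {"summary": "Quote for 3 units", "price": 8.0}))
```

Because every check is deterministic and side-effect free, the same input and output always produce the same verdict, which makes failures reproducible in tests.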

The Observability Advantage

Deterministic control planes have a massive secondary benefit: observability becomes trivial. When every state transition is explicit and every handoff is validated, you get a complete audit trail for free. AI observability stops being a bolt-on concern and becomes an emergent property of the architecture.

You can answer questions that probabilistic systems make nearly impossible:

- Exactly which agent processed this input?

- What was the validated state at each transition?

- Where did this workflow diverge from the expected path?

- What postcondition failed and why?

For enterprises operating under audit trail and explainability requirements, this is not a nice-to-have. It is the difference between a system you can defend to regulators and one you cannot.
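As a rough illustration of why these questions become easy to answer, consider an append-only transition log written by the control plane. The record fields and method names below are assumptions made for the sketch, not a prescribed format.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass(frozen=True)
class TransitionRecord:
    workflow_id: str
    agent: str
    from_state: str
    to_state: str
    failed_postcondition: Optional[str] = None  # name of the check that blocked progress
    timestamp: float = field(default_factory=time.time)


class AuditLog:
    """Append-only record of every transition the control plane performs."""

    def __init__(self) -> None:
        self._records: list[TransitionRecord] = []

    def record(self, rec: TransitionRecord) -> None:
        self._records.append(rec)

    def trail(self, workflow_id: str) -> list[TransitionRecord]:
        """Which agents processed this workflow, and in what order?"""
        return [r for r in self._records if r.workflow_id == workflow_id]

    def first_divergence(self, workflow_id: str, expected: list[str]) -> Optional[TransitionRecord]:
        """Where did the workflow leave the expected sequence of states?"""
        for rec, want in zip(self.trail(workflow_id), expected):
            if rec.to_state != want:
                return rec
        return None

    def to_jsonl(self) -> str:
        """Serialize one JSON object per line for export to a log store."""
        return "\n".join(json.dumps(asdict(r)) for r in self._records)


log = AuditLog()
log.record(TransitionRecord("wf-1", "extractor", "EXTRACT", "CLASSIFY"))
log.record(TransitionRecord("wf-1", "classifier", "CLASSIFY", "ERROR",
                            failed_postcondition="category_in_taxonomy"))
bad = log.first_divergence("wf-1", ["CLASSIFY", "DONE"])
print(bad.agent, bad.failed_postcondition)
```

Each of the four questions above maps to a simple filter over these records, with no need to reconstruct intent from free-form model output.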

Migration Path From Probabilistic to Deterministic

If you already have a multi-agent system with probabilistic routing, the migration path is incremental:

Phase 1: Instrument. Add logging to every handoff. Measure success rates, failure modes, and context loss at each transition point. You cannot fix what you cannot see.

Phase 2: Schema enforcement. Define output schemas for each agent. Start in observation mode -- log violations but do not block. Once violation rates are understood, switch to enforcement.

Phase 3: State machine extraction. Map your actual workflow patterns (from logs) into explicit state machines. You will likely discover that 90% of executions follow 3-4 common paths. Encode those paths deterministically.

Phase 4: Control plane introduction. Replace probabilistic routing with explicit state machine transitions. Keep agents internally flexible but externally bounded.
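Phases 2 and 3 lend themselves to a short sketch. The version below assumes handoff logs are available as `(workflow_id, agent)` pairs in execution order and that a schema can be approximated as a mapping from field name to type; both are simplifications, and all names are hypothetical.

```python
from collections import Counter
from enum import Enum


class Mode(Enum):
    OBSERVE = "observe"  # log violations, let the handoff proceed
    ENFORCE = "enforce"  # block the handoff on violation


class SchemaViolation(Exception):
    pass


violations: Counter = Counter()  # per-agent violation counts gathered during observation


def check_handoff(agent: str, output: dict, required: dict[str, type], mode: Mode) -> bool:
    """Phase 2: count violations in OBSERVE mode; raise in ENFORCE mode."""
    bad = [key for key, typ in required.items() if not isinstance(output.get(key), typ)]
    if not bad:
        return True
    violations[agent] += 1
    if mode is Mode.ENFORCE:
        raise SchemaViolation(f"{agent}: invalid or missing fields {bad}")
    return False


def common_paths(handoff_log: list[tuple[str, str]], top: int = 4) -> list[tuple[tuple[str, ...], int]]:
    """Phase 3: group logged events into per-workflow paths and rank them by frequency."""
    paths: dict[str, list[str]] = {}
    for workflow_id, agent in handoff_log:
        paths.setdefault(workflow_id, []).append(agent)
    return Counter(tuple(p) for p in paths.values()).most_common(top)


log = [("w1", "intake"), ("w1", "billing"), ("w2", "intake"),
       ("w2", "billing"), ("w3", "intake"), ("w3", "support")]
for path, count in common_paths(log):
    print(" -> ".join(path), count)
```

The ranked paths are the candidates for encoding as explicit state machine transitions; anything in the long tail deserves a closer look before deciding whether it is a legitimate route or a routing error.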

The entire migration can happen without downtime using feature flag patterns for AI model rollout -- run probabilistic and deterministic paths in parallel, compare results, and shift traffic gradually.
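One way to run both paths side by side is sketched below, assuming each router is a plain function from a task to an agent name. The hash-based bucketing and all names here are illustrative; a real deployment would more likely use an existing feature flag service.

```python
import hashlib
from typing import Callable

Router = Callable[[dict], str]  # maps a task to the name of the agent that should handle it


def bucket(workflow_id: str) -> int:
    """Stable 0-99 bucket, so a given workflow always lands on the same side of the flag."""
    return int(hashlib.sha256(workflow_id.encode()).hexdigest(), 16) % 100


def route(
    workflow_id: str,
    task: dict,
    legacy_router: Router,
    deterministic_router: Router,
    rollout_percent: int,
    mismatches: list,
) -> str:
    """Evaluate both routers, record disagreements, and serve one based on the rollout flag."""
    legacy = legacy_router(task)
    deterministic = deterministic_router(task)
    if legacy != deterministic:
        mismatches.append((workflow_id, legacy, deterministic))
    return deterministic if bucket(workflow_id) < rollout_percent else legacy


mismatches: list = []
chosen = route(
    "wf-42",
    {"type": "refund"},
    legacy_router=lambda task: "support",          # stand-in for probabilistic routing
    deterministic_router=lambda task: "billing",   # stand-in for the state machine
    rollout_percent=0,
    mismatches=mismatches,
)
print(chosen, mismatches)
```

With `rollout_percent` at 0 the legacy path still serves all traffic while the mismatch list accumulates evidence; raising it shifts traffic gradually. Note that calling both routers doubles routing cost and is only safe when routing itself has no side effects.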

The Hard Truth About Production AI

The AI industry has a reliability problem that no amount of model improvement will solve. GPT-5 will not make probabilistic handoffs reliable because the issue is architectural, not capability-based. A more capable model making probabilistic decisions is still making probabilistic decisions.

Production systems need guarantees. Not "usually works" -- guarantees. The teams shipping reliable multi-agent systems to production have all converged on the same insight: constrain the system at the orchestration layer, not the agent layer. Let agents be smart within boundaries. Make the boundaries dumb and rigid.

This is not a limitation of agentic AI. It is the enabler. Hard boundaries are what allow you to trust agents with increasingly complex tasks -- because you know exactly what they can and cannot do, and you can prove it to your stakeholders, your compliance team, and your customers.

Founder & Principal Architect
